Intelligent Hardware

It’s nearly impossible for Wall Street to assess the degree to which AI, more specifically agentic AI, will have...

In the event an AI has become sentient, can the sentient AI file for a copyright or a patent? Can a sentient AI be granted rights akin to those that we have as humans under the law?

It has been another wild week in the world of artificial intelligence.

Anthropic has been working on its most powerful artificial intelligence, now known as its Mythos model, which is reportedly extremely good at identifying weaknesses and vulnerabilities in information technology systems.

The threat of the technology being used by bad actors – or by Anthropic itself, which has proven to be the most political of the frontier AI model companies – has become a national security issue.

It is so serious that Treasury Secretary Scott Bessent and Fed Chair Powell called an emergency meeting with CEOs of the top U.S. banks over these risks. After all, if Anthropic has this level of technology, China may very well have similar capabilities. The entire financial system could be at risk.

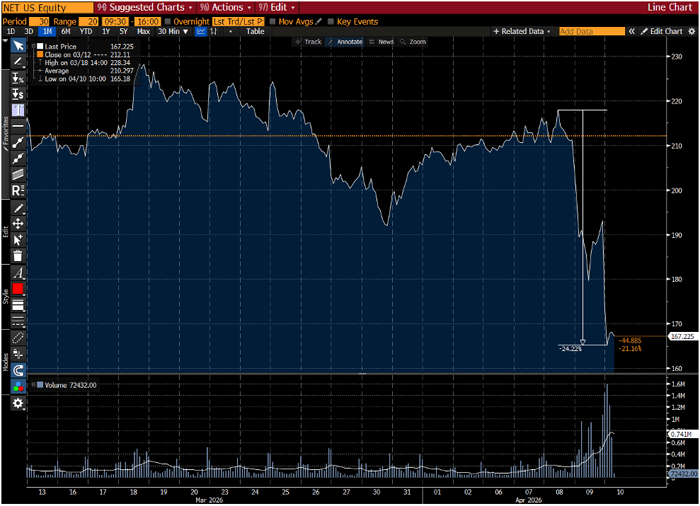

The news wasn’t kind to cybersecurity companies, which is somewhat ironic. Cloudflare (NET) has collapsed 24% in the span of 48 hours.

1-Month Chart of Cloudflare (NET)

If anything, AI-enabled cybersecurity companies are more critically important than they have ever been before. And they are going to have to innovate at a pace faster than any previous time in history to keep up with the advancements in artificial intelligence.

And standing in contrast to the cybersecurity stocks was Taiwan Semiconductor Manufacturing (TSM) this week, which announced a stunning 35% year-on-year growth in demand for AI semiconductors. Not surprisingly, this massive company’s stock is up 18% in the last 24 hours.

These stocks are all part of the same trend, artificial intelligence, yet some are going straight up and some are going straight down. They are perfect examples of the disruptive impact of technology.

Here’s to a peaceful, less volatile weekend,

Jeff

Jeff Brown,

I have been doing a lot of research lately using Grok and Claude, and I recently finished editing a report generated by Claude. I wondered if I should copyright it.

What did I do? I asked Claude about that, and he said the Thaler v Pearlmutter case denied Thaler the copyright because the AI created the report. Thaler was also denied a patent for the same reason in Thaler v Vidal because an AI had created the design.

What’s going to happen to all the drug companies that are discovering new drugs via AI? Will those drugs be patentable? Where is the legal side of AI assisting the world in all sorts of endeavors going to wind up on the rights of intellectual property (IP) created by AIs?

– Richard C.

Hi Richard,

This is going to become an interesting and hotly debated topic in the near future.

But at the moment, there is a clear understanding of what can be copyrighted and patented with regard to the utilization of artificial intelligence.

In the two legal cases you mention – Thaler v. Pearlmutter and Thaler v. Vidal – the decision was that the AI could not be listed as the sole author or the inventor.

What was different in these cases is that the image Stephen Thaler tried to copyright in Thaler v. Pearlmutter was entirely made by an AI, with no human input. Similarly, in Thaler v. Vidal, Thaler submitted two inventions that were “created” by an AI with no human input.

The U.S. Copyright Office has made it clear that a single prompt into a generative AI model doesn’t constitute sufficient human authorship and thus cannot be copyrighted. If you’re interested in digging deeper, you can go here to see the U.S. Copyright Office’s Report on Copyright and Artificial Intelligence.

With that said, in the example that you used with your own works, you certainly could copyright that work. The act of editing, arranging, modifying, or adding human contributions to something that was initially created by a generative AI is considered to be a meaningful human contribution, and therefore, it can be copyrighted.

The same is true regarding patents, in the example that you used with drug companies. Using AI models to sift through thousands or even millions of potential drug targets to create a short list of candidates does not lead to a final product. It is part of the process that requires a lot of human-led management, testing, analysis, and effort.

So, in short, yes, drug companies that leverage AI to accelerate research and development will absolutely be able to patent their inventions, as there will be meaningful human contributions to the drug selection, design, and process of developing that drug.

Off the top of my head, AI-empowered drug development companies like Insilico Medicine (ISLMF), Exscientia (note: Recursion acquired Exscientia in 2024), and Recursion Pharmaceuticals (RXRX) are great examples. All have patented drugs that leveraged AI. And I know many others have done the same.

Where things will get really interesting is when there is a raging debate over whether an AI has become sentient, and if so, can the sentient AI file for a copyright or a patent? An even larger topic is whether a sentient AI would be granted rights akin to those that we have as humans under the law.

And in fact, this is the background behind Stephen Thaler’s legal cases. He is not a lawyer. He is a computer scientist. And he claims to have created an AI that is now sentient. His court cases are an effort to get the court to acknowledge his claim that he has created a new species on Earth and that it should have legal rights similar to those of humans.

He hasn’t, but that’s the game that he’s playing to gain attention and notoriety.

Sentience is a big topic for another day, but it won’t be too long before we’re having that debate.

Hello Jeff and the entire team,

I hope this message finds you well.

I’m writing to follow up on your recent analyses regarding the transformations brought about by artificial intelligence. I share your optimism about the long‑term potential for abundance generated by AI, robotics, and technological advances by 2030 and beyond. However, several aspects seem underestimated in the most optimistic narratives, particularly regarding working‑class communities and the lower middle class in the United States.

First, professional retraining into AI‑related or highly technical roles remains inaccessible for a large number of people, as these fields are simply too complex for many.

Second, regarding social assistance and individual responsibility: even for the small percentage of people who are genuinely resistant, the penalty already represents a minimum level that is far from easy to endure, without going to the extreme of eliminating all support – which, in my view, would be excessively harsh.

That would result in people ending up on the streets, with or without children – you can picture the situation. Would you really be proud of a state that decided to move in that direction? I don’t think so. Even when individuals show poor motivation, the punishment becomes inhumane.

Finally, in the longer term, in a world where software and physical AI will occupy the majority of jobs, the economy will only be able to adapt if appropriate mechanisms are put in place to redistribute the wealth generated and maintain sufficient demand.

Without this, the risk of economic and social chaos – marked by mass unemployment, collapsing consumption, and rising social tensions – becomes very high. The promised abundance will not automatically materialize for everyone if transitions are not managed in an inclusive way.

These points do not call into question the transformative potential of AI, but they highlight the need for a more nuanced and proactive approach, so that the benefits are not concentrated solely among those who are already well‑positioned educationally and professionally.

I would be very interested in your thoughts on these specific dimensions.

Thank you for your attention and for the quality of your publications.

– Cherif K.

Hello Cherif,

Thank you for the thoughtful question. It is a good framework for how to think about the challenge involved in this transition to a world that will leverage AI in every capacity.

One misperception about workforce retraining is that it will have to happen in highly technical fields, as you suggested. That’s actually not the case. Corporations will primarily be creating AI models for every discipline and industry, and workers will be trained on how to interface with and use those models.

Anyone who can use a computer or smartphone today and has basic problem-solving skills will be able to interface with AI tools. In fact, using these models will be a lot easier than using computers is today.

Another key point is that many of the jobs that will be in the highest demand are and will be in the trades. We need welders, concrete workers, electricians, plumbers, and many other niche, skilled laborers. These are all skills that can be taught.

Today, many of these skilled trades are earning more than college graduates. Opportunity will be everywhere as our economy grows at the fastest pace in history due to these radical technology-powered productivity boosts that have already begun.

Central governments can support this transition by putting in place pro-business, pro-economy regulations, as well as programs that support job retraining to fields/skills where there are labor needs. This can be done through tax incentives for both individuals and corporations, as well as small grants for education.

In the United States, a form of Universal Basic Income (UBI) already exists through the combination of welfare, disability payments, Medicaid, food stamps, EBT funds, etc. Fraud is absolutely rampant, as we have learned.

In the U.S. right now, there are about 43.4 million men and women ages 16–64 (i.e., working age) who are not in the workforce for a variety of reasons. There are plenty of jobs, but too many people are either unwilling or unable to take them. And the current UBI system gives many an incentive not to work (i.e., it is not worth the effort to make a few extra dollars).

The real question is how to root out the trillions of dollars of fraud in the system right now. Just imagine the positive impact of eliminating the fraud and then putting a small portion of what was saved towards programs that help workers re-enter the workforce.

Progress is being made right now in the U.S., prosecuting those who have made millions by exploiting state-sponsored “virtuous” programs through hospice fraud, childcare fraud, healthcare fraud, Medicaid fraud, COVID fraud, and so on. Trillions of taxpayer dollars have been stolen.

My point is that the wherewithal and resources are there to positively affect this transition. The fraudulent programs have to stop, the rule of law must be enforced, freedom of speech must be protected, and there must be transparency in government programs to ensure funds are being used as they are intended.

AI, robotics, and automation will drive down the costs of goods and services, enabling a higher quality of life for an entire population. It will also create accelerated economic growth and opportunities for anyone willing to work.

The more philosophical question centers around what individuals will do to find purpose and meaning in life when they no longer have to work 40 or 50 hours a week. What percent of the population will continue to be motivated and productive versus those who choose not to be? And for those who choose to do as little as possible, what will they revert to? The prevailing line of thought is watching media, playing games, entertainment, and drugs/alcohol.

Education is critically important as part of the solution. And it is one of the areas where I know AGI will have a radically positive impact. AI tutors will have a nominal cost and will be able to provide expert-level instruction on any topic to children, and even tailor their teaching style to the way each student learns best.

Just imagine the positive impact on society if the potential of each child could be maximized, and each individual could pursue the kind of career that they are most passionate about.

This is truly a battle between those who share my vision of an abundant and free future for all, and those who wish to decelerate, strangle progress, and use control to defraud hard-working taxpayers through state and national government programs.

You know which future I believe in…

Hi Jeff,

I really enjoy all your services as an unlimited subscriber and always look forward to receiving your daily Bleeding Edge publication, as well as reports from your other services I subscribe to.

Recently, I have seen an increase in companies that are featured as significant participants in the current technological AI/Data Center buildout but are not being added to the portfolios of the various services and will not be tracked in future updates. The lack of future guidance is concerning to me, and, as a result, I will probably not participate in these opportunities.

Can you explain the reasoning for this type of feature/recommendation? I do not understand the purpose of featuring a company and then not recommending a buy or follow-up.

Best Regards.

– Wayne B.

Hi Wayne,

Thank you for writing in with the feedback.

Almost all of the companies/projects that my team and I research and publish research on enter into our model portfolios throughout my various research publications. Occasionally, I’ll publish research on a company that I’m excited about in a special report to highlight that company and a major growth trend that is happening.

In several cases over the years, we have formally moved some of those companies into our model portfolios, and I have on occasion published follow-up guidance on a company not formally in the portfolio.

A good example would be MP Materials (MP). I’ve published follow-up guidance on the company because I published the research in a special report for Near Future Report. It was a company that I wanted to highlight for my subscribers as a standout company for the opportunity in the report.

But it’s not the kind of company that I typically recommend in the Near Future Report, as it is more speculative, which is why it remained as a special report recommendation and not part of the formal model portfolio.

Occasionally, I’ll highlight a company in a special report and then move it into the model portfolio when I see a good entry point for the company.

My goal is simply to empower my subscribers to have the information needed to assess the company and determine if it is something that they are interested in investing in. This is always an individual decision based on everyone’s investment goals, financial planning, and interests.

This is why I am only able to provide general guidance and recommendations and am unable to provide any individual investment advice.

I do appreciate the feedback, and my team and I will give some more thought to how best to manage future guidance regarding companies in special reports.

Thanks again,

Jeff

P.S. Hi, Jeff’s managing editor here with some exciting news…

Over the past few months, Jeff and Brownstone Research senior crypto analyst Ben Lilly have been quietly building a new research newsletter dedicated to what’s really happening in the blockchain industry right now.

They’re calling it Chain of Thought… and it’s designed to bring Brownstone readers deeply informed insights on the institutional moves, data, and regulatory shifts that are quietly reshaping the global financial system forever.

Ben’s insights into the digital assets industry come from more than a decade of experience analyzing and investing in crypto and blockchain technology. Longtime Bleeding Edge readers may recognize him, as we’ve featured his work in these pages many times before.

If you’d like to start receiving Chain of Thought, you can go here to sign up for access now.
