Countdown to Criticality

There was some renewed excitement in the last few days about fourth-generation nuclear fission technology and the Department of...

Whether it’s Anthropic or OpenAI, private AI companies need to continue to raise billions to fund their research and development.

In the last 24 hours, leaked documents from frontier AI model leader Anthropic surfaced, indicating that the company is working on its next-generation artificial intelligence, Claude Mythos.

The documents reveal that the model is a “step change” in performance, which is quite different from an incremental improvement. They also reveal that the model is so powerful that it “poses unprecedented cybersecurity risks.” My working assumption is that the model is so intelligent that it can hack into just about any computing system.

Now, I’ll be the first to suggest that this could be a marketing ploy. Whether it’s Anthropic or OpenAI, these private companies need to continue to raise billions to fund their research and development. They are burning cash and need more to continue the race to AGI. Whether this is a game or real, I can’t tell you.

What I can say is that they are definitely working on a much stronger model. And it could very well be a “step change” in performance. I have been predicting that xAI’s next release of Grok, which will likely be known as Grok 5, will be artificial general intelligence (AGI), which will be at least an order of magnitude more powerful than the current model.

It’s getting very weird out there – I can feel it in my gut.

It’s coming fast, and we’re going to be ready and well-positioned for it.

Jeff

Jeff,

I am a longtime follower and am often bowled over by some of your thoughts and theories.

Have you given any thought to the potential for robots to eventually get fed up with the human race and go on a killing spree to put an end to the human race?

Kindest Regards,

– Roger D.

Hi Roger,

Thanks for writing in and for being a longtime follower.

I most certainly have thought a lot about this and continue to do so. It’s hard not to, given that the implications would be severe for all of us.

One of the reasons that this idea has been planted in our heads, however, has been science fiction and Hollywood. It’s great fodder for a storyline or plot in a movie, TV show, or book.

A few examples:

But we should all remember this is just fiction. It’s very easy for writers to overlook how unlikely it is that the above scenarios become reality. The goal is to instill fear and cause an emotional response to sell their product.

But the reality is that this is a very unlikely outcome.

What is a likely outcome is that there will be bad actors who develop this technology with malicious intent. And the good actors will have to defend against these threats, which is precisely why it is so critical to accelerate the development of AGI and ultimately ASI.

The worst possible outcome would be one where bad actors or adversaries get there first, and we are unable to defend against that threat.

As for how the good actors develop the technology, naturally, we’ll ensure that the technology is aligned with human values. And there will certainly be guardrails put in place.

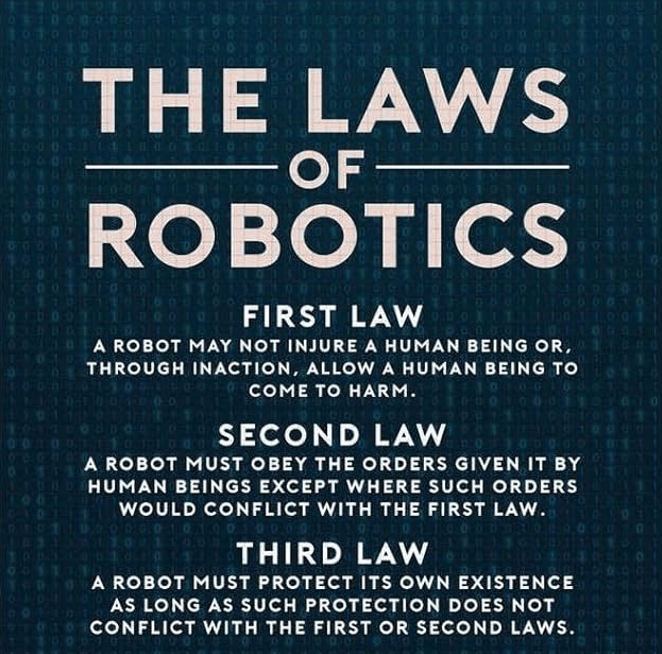

In The Bleeding Edge – Cautious Optimism, and other issues where these concerns are raised, I usually appeal to Isaac Asimov’s Laws of Robotics.

Initially, it was just the three, but Asimov – perhaps sitting with the same questions that many of us have regarding robots, their agency, and what they might do with it – later conjured up the ultimate “Zeroth Law.”

Above all else, “A robot may not harm humanity, or, by inaction, allow humanity to come to harm.”

I consistently bring up Asimov and his laws because they speak to the fact that, for as long as AI has been manifested, even only conceptually, these concerns have always been – and I imagine, to some degree, always will be – on the minds of humans. I believe that the more real and present the technology becomes, the more the fears will actually increase in the first decade or so of use.

How could we not wonder, “Is it self-aware? Is it conscious? And if so, what are its true motivations and feelings about us?” But in time, when intelligent general-purpose robots become part of our everyday life, those fears will dissipate, and we won’t be able to imagine a life without “them.”

I’ve written before…

Technology always has the potential to go off the rails when in the hands of unchecked bad actors.

I maintain my stance that a positive outcome with artificial general intelligence (AGI) is still the most likely outcome as long as we stay on the current trajectory.

The word “alignment” is often used to describe the development and employment of artificial intelligence in ways that are aligned with human interests and the benefit of humanity so that we avoid a situation where an AI “goes rogue,” as so many fear.

[…]

Bad actors getting their hands on AGI and ASI first – and the U.S. being defenseless because we opted not to pursue this technology, or we over-regulated the technology, in the name of self-preservation – is the far greater threat in my mind.

And as such, we’ll build.

And if you’re still worried and want to explore this topic some more, I encourage anyone to go back and read similar issues where I’ve discussed these concerns…

Each of these centers on the same core concern: an AI – one I would qualify as an artificial superintelligence – gains sentience and the results are catastrophic.

But focusing too heavily on the “what could go wrong” means missing what will go right with advanced AI.

We’re on the path to a cleaner, healthier, wealthier, safer, better future thanks to artificial intelligence. Fear will only slow us down.

This is most likely way too small a company for any trading service, but I have been watching Vivos, Inc. (RDGL) for 10 years, as they are developing the first injectable brachytherapy device for cancer treatment.

Radioactive particles are incorporated into a hydrogel so that the gel is injected directly into the tumor, and the radioactivity stays in place and does not circulate throughout the body as a pharmaceutical does. They call it precision brachytherapy.

They are already doing clinical studies in India and plan to submit to the FDA at the end of March or the beginning of April. Mayo Clinic will do the clinical studies here in the USA.

They have been successfully treating pets for cancer for more than 5 years and have a former FDA reviewer as a consultant to give their IDE submission the final touches to help ensure approval.

– Richard A.

Hi Richard,

I took a quick look at Vivos (RDGL). Please understand that I can’t provide personalized investment advice, so my comments below are just general comments after objectively looking at the company for a relatively short period.

To your point, this is a tiny company. It is so small that it has no analyst coverage.

Its therapy uses yttrium-90 in an injectable hydrogel for brachytherapy. Brachytherapy is a type of internal radiation therapy whereby a radioactive substance is placed directly into a cancer tumor in the hopes of destroying cancer cells.

Vivos does have a product for veterinary use, IsoPet, but its revenues are absolutely insignificant.

Vivos is obviously trying to take this therapy, currently used for animals, and extend it to humans. It filed with the FDA for an investigational device exemption (IDE), but the FDA declined the application after review in August 2025. It appears that Vivos will try again in April this year.

The problem with a company like this is that it has almost no cash at all. The only way it can survive is by continuously raising capital. And each time it does that, it dilutes existing shareholders, and there aren’t many. It has years of clinical trials ahead, assuming it gets a green light from the FDA, and no money to fund those trials.

Even if the company got lucky with a double in share price, it would have to turn around immediately and conduct a secondary offering just to get to the next milestone. I have significant doubts about this company’s ability to remain a going concern.

I wish I could be more positive, but that’s my take.

Thank you, Jeff!

I am so amazed at all your writings.

This new service is so timely, and I am looking forward to sending you a thank-you mail for my first successful trade here.

Also, it is so good to be a Brownstone Unlimited Member, knowing that you keep your word of adding new services to my account.

Your approach with sincerity and integrity for the whole organization makes me stand up and listen.

I will do more of it and try to shut out all the other noise.

Thank you again!

– Per T.

Hi Per,

It’s wonderful to have you as part of our community, and thank you for joining us as an Unlimited member.

I had a business call with a company yesterday, and the CEO asked me a question along the lines of “when are you going to retire, and why haven’t you retired?” My answer was that I couldn’t imagine doing so right now. Developments in tech/biotech are the most exciting they’ve ever been, and we’re just at the beginning of extraordinary change. How could I possibly step back when we’re on the cusp of something I’m so passionate about?

The other reason I don’t have any interest in retiring is my subscribers, like you. You all motivate me every day to work hard and share the knowledge that I’ve gained over the last 40 years of study, research, travels, and real-world work in industry.

Nothing makes me happier than to hear that my subscribers find my work valuable and can grow their wealth using my research.

My name is on the business, and I take this responsibility seriously. And I’m fortunate to have an incredible team that helps me every day to deliver on our promise, produce incredible research that can’t be found anywhere else, stack the deck in favor of our subscribers, and empower our subscribers with more knowledge and information, not just about investments, but about preparing for the future that is coming.

Thanks again.

We have so much to look forward to,

Jeff

Read the latest insights from the world of high technology.

There was some renewed excitement in the last few days about fourth-generation nuclear fission technology and the Department of...

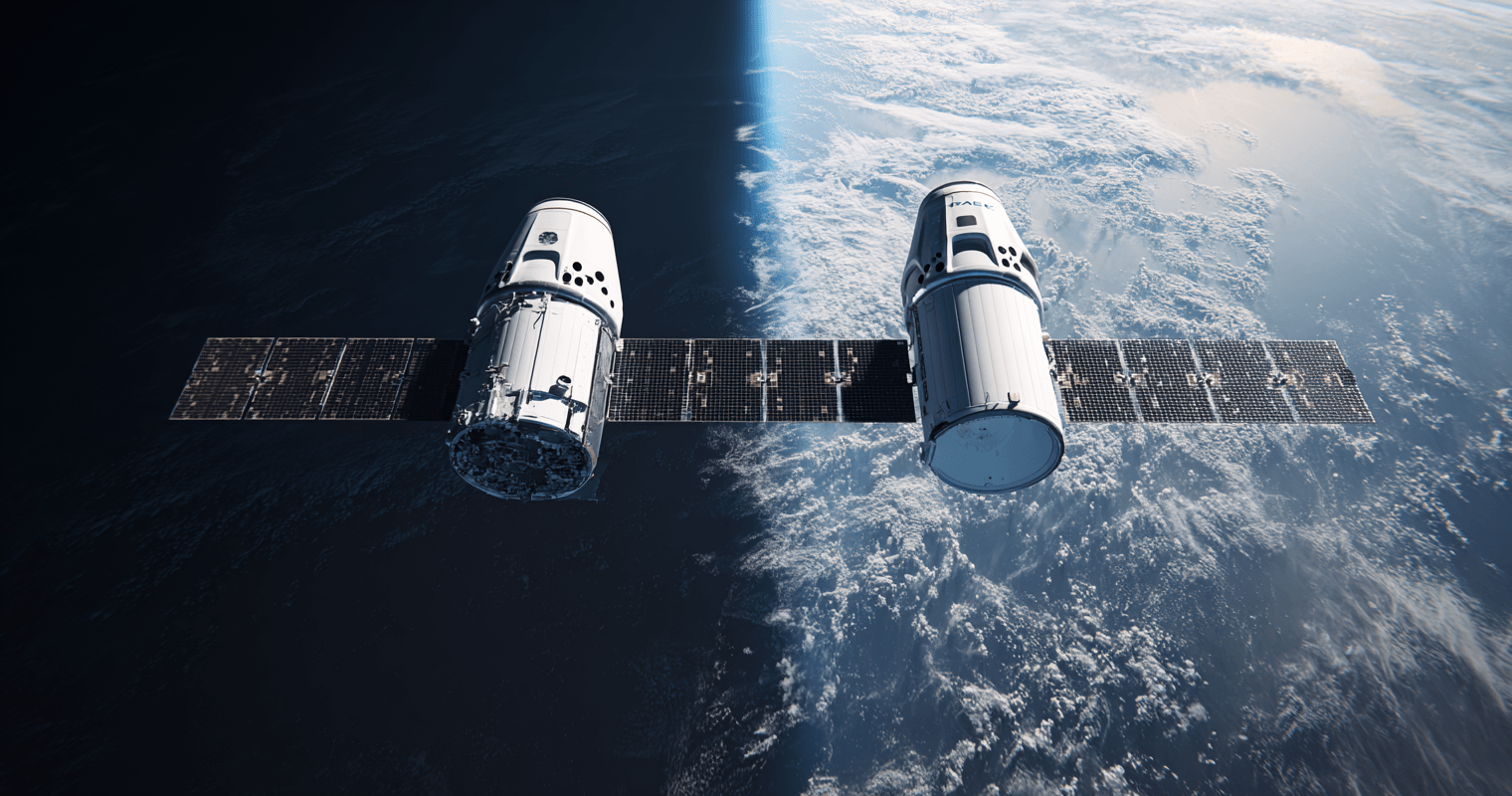

Early this month, Amazon (AMZN), through its Amazon Leo subsidiary, filed a petition with the Federal Communications Commission (FCC)....

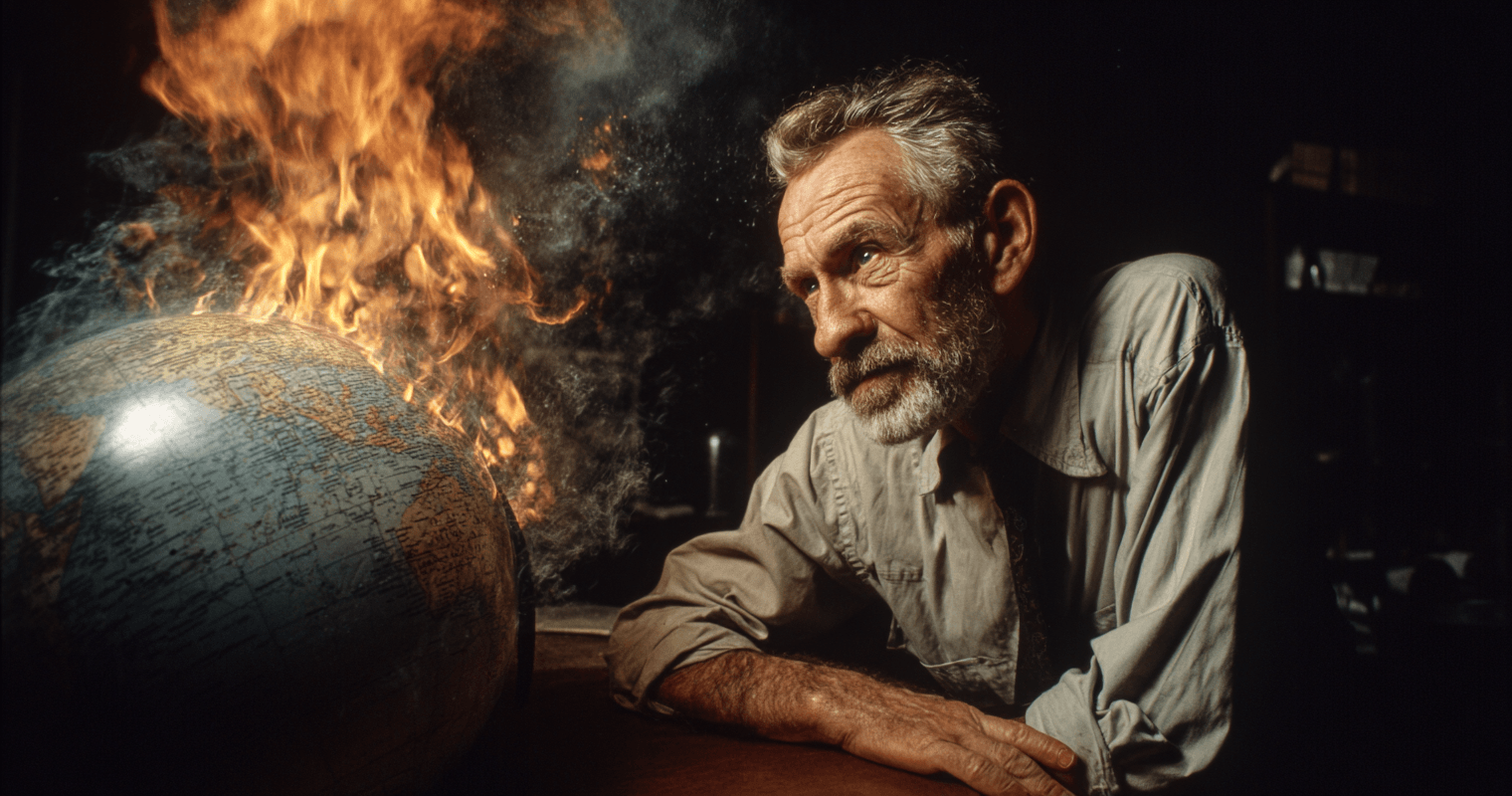

It’s hard to believe that anyone would listen to Paul Ehrlich at all after being so concretely proven to...

Thanks for signing up for The Bleeding Edge — we’re glad you’re here!

Want updates sent straight to your phone too? Sign up for SMS alerts to get the latest delivered via text.

Brownstone Research: By submitting your phone number, you agree to our SMS Terms & Conditions, Terms of Use, & Privacy Policy, and give express written consent to receive marketing text messages from BSR. Messages are recurring & frequency may vary. Reply STOP to 94703 to opt out. Reply HELP to 94703 for info. Consent is not a condition of purchase. Message and data rates may apply. For additional information, you may contact Customer Service at 888-512-0726 or smssupport@brownstoneresearch.com.