Esse Quam Videri


Google’s Gemini AI model is out… But behind the flashy demo videos and stats, it’s not at all what we expected. But the bigger question here is whether Google is failing… or if AI development has hit a plateau.

Gemini is out. But it’s not all it’s made out to be.

On December 6, Google released its long-awaited AI model, Gemini.

Rumors of Gemini have been swirling this year. By all accounts, it should have outperformed OpenAI’s GPT-4 model.

According to Google, it did just that… But be careful taking Google at face value on that.

I’ve been digging into the developer notes from Google. And behind the impressive demo videos and stats is an ugly truth…

Gemini is a disappointment.

I’ll get into those details in a moment.

But the bigger question here is whether Google is failing… or if AI development has hit a plateau.

Google made three Gemini models available…

Ultra

Pro

Nano

So far, we only have access to Pro. This mid-range model is a stripped-down version of Ultra. That means I can’t test Ultra on my own. I’ll have to take Google’s word on how it performs.

Google’s demo video of Ultra showed off some impressive capabilities. Here’s a clip of it recognizing a game of rock, paper, scissors…

Demo of Gemini (Source: Google)

But that’s not how it works in real time.

Google’s developer notes showed that Gemini was manually prompted to describe each hand gesture.

It wasn’t until it was asked a leading question about all three gestures in one slide that it could guess the game.

When I first watched the demo video, I was blown away. I thought Gemini was making sense of real-time video. Its responses were snappy and creative.

But the developer notes show us that Gemini could only make sense of still images with text prompts. Even then, the responses were bland and generic.

Google tried using similar tricks to show off Gemini’s strengths.

Here’s a chart that makes Gemini out to be the strongest AI…

AI benchmark testing between Gemini and GPT-4 (Source: Google)

In this series of tests, human experts scored 89.8%. GPT-4 scored 86.4%. And Gemini bested them with a score of 90%.

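But just like the demo video, the devil is in the details. The two scores weren’t produced the same way. Per the fine print on Google’s chart, Gemini’s 90% came from a chain-of-thought method that samples 32 answers per question, while GPT-4’s 86.4% came from a standard 5-shot prompt. That’s 32 against 5, or 6.4x, and not a like-for-like test.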

That’s not the only unfair comparison. Google was testing Gemini against GPT-4. But OpenAI has an even better model, GPT-4 Turbo, that was released in November.

Third-party testers put GPT-4 Turbo’s score at about 89%.

That means Gemini Ultra is roughly on par with the latest GPT-4 model.

Don’t get me wrong. That’s impressive. But it’s a far cry from the predictions that Gemini would leave GPT-4 in the dust.
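To make those gaps concrete, here’s a quick back-of-the-envelope sketch in Python. It uses only the scores quoted above. The names (`scores`, `lead_over`) are my own, made up for illustration, and the GPT-4 Turbo figure is the rough third-party estimate, not an official number.

```python
# Benchmark scores quoted in this article, in percent.
# The GPT-4 Turbo value is an approximate third-party estimate.
scores = {
    "Human experts": 89.8,
    "GPT-4": 86.4,
    "GPT-4 Turbo": 89.0,
    "Gemini Ultra": 90.0,
}


def lead_over(scores, leader="Gemini Ultra"):
    """Return the leader's margin over every other entry, in percentage points."""
    top = scores[leader]
    return {
        name: round(top - value, 1)
        for name, value in scores.items()
        if name != leader
    }


gaps = lead_over(scores)
for name, gap in gaps.items():
    print(f"Gemini Ultra leads {name} by {gap} points")
```

Against the original GPT-4 number, the lead is a few points. Against the GPT-4 Turbo estimate, it shrinks to about one.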

After all, Google is a $1.6 trillion company with $119 billion in cash and more than 182,000 employees to throw at AI development.

OpenAI was recently valued at $80 billion. It has only about 770 employees and far less cash.

That makes Google’s inability to compete with, let alone outdo, OpenAI startling.
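For a sense of scale, here’s a rough sketch of the size gap using the figures above. The dictionary keys and the `size_ratios` helper are hypothetical names of my own. Cash is left out because I only have a number for Google.

```python
# Figures quoted in this article; treat them as approximate.
google = {"valuation_usd": 1.6e12, "employees": 182_000}
openai = {"valuation_usd": 80e9, "employees": 770}


def size_ratios(big, small):
    """Return how many times larger `big` is than `small` on each shared metric."""
    return {key: big[key] / small[key] for key in big if key in small}


ratios = size_ratios(google, openai)
print(f"Valuation: about {ratios['valuation_usd']:.0f}x")
print(f"Headcount: about {ratios['employees']:.0f}x")
```

On these numbers, Google is roughly 20 times OpenAI’s valuation and has well over 200 times the headcount.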

But is this a Google problem… or a technology one?

Outside of Google, we’re seeing smaller firms push the boundaries of what’s possible with AI.

I recently highlighted breakthroughs in AI video generation from Pika.

Here’s a demo video from Pika…

Pika 1.0 generates video based on text prompts. Source: Pika.art

These videos aren’t free of flaws. But they mark a huge leap in progress.

Separately, Mistral, a small Paris-based AI firm, released its latest model, which outperforms GPT-3.5. That’s impressive for a company with only 22 employees.

And OpenAI’s Q* model is reported to be able to reliably do grade school math. That may not seem very impressive… But it marks a major breakthrough in how AI models reason through problems.

The point of these examples is to show that big and small firms alike are making progress in AI.

And that means the failings of Gemini are a Google problem… not a technological one.

Now, it may seem like I’m ragging on Google a lot. But there’s a good reason for this.

You have to understand that I’m not going to blindly cheerlead companies during one of the most significant tech revolutions the world will ever see.

I like Google as a company. They’ve done great work with AlphaFold and AlphaMissense, which I’ve covered previously.

But they need to pick up the slack on AI development to stay competitive.

Google isn’t out of the race. But Gemini needs to thoroughly outperform GPT-4. Management can’t rely on fudged numbers and demos to win over the masses.

Gemini Ultra is still under development. Google estimates that it will launch in early 2024. Developers still have time to push the boundaries of what’s possible.

I look forward to thoroughly testing Gemini Ultra against GPT-4 and other models upon its release.

Regards,

Colin Tedards

Editor, The Bleeding Edge

Read the latest insights from the world of high technology.

This case isn’t a silly “billionaire vs. billionaire” battle… It’s actually about the abuse of charitable trusts and non-profits...

Many have argued that improved economics will make beaming solar energy down to Earth makes so much sense. But...

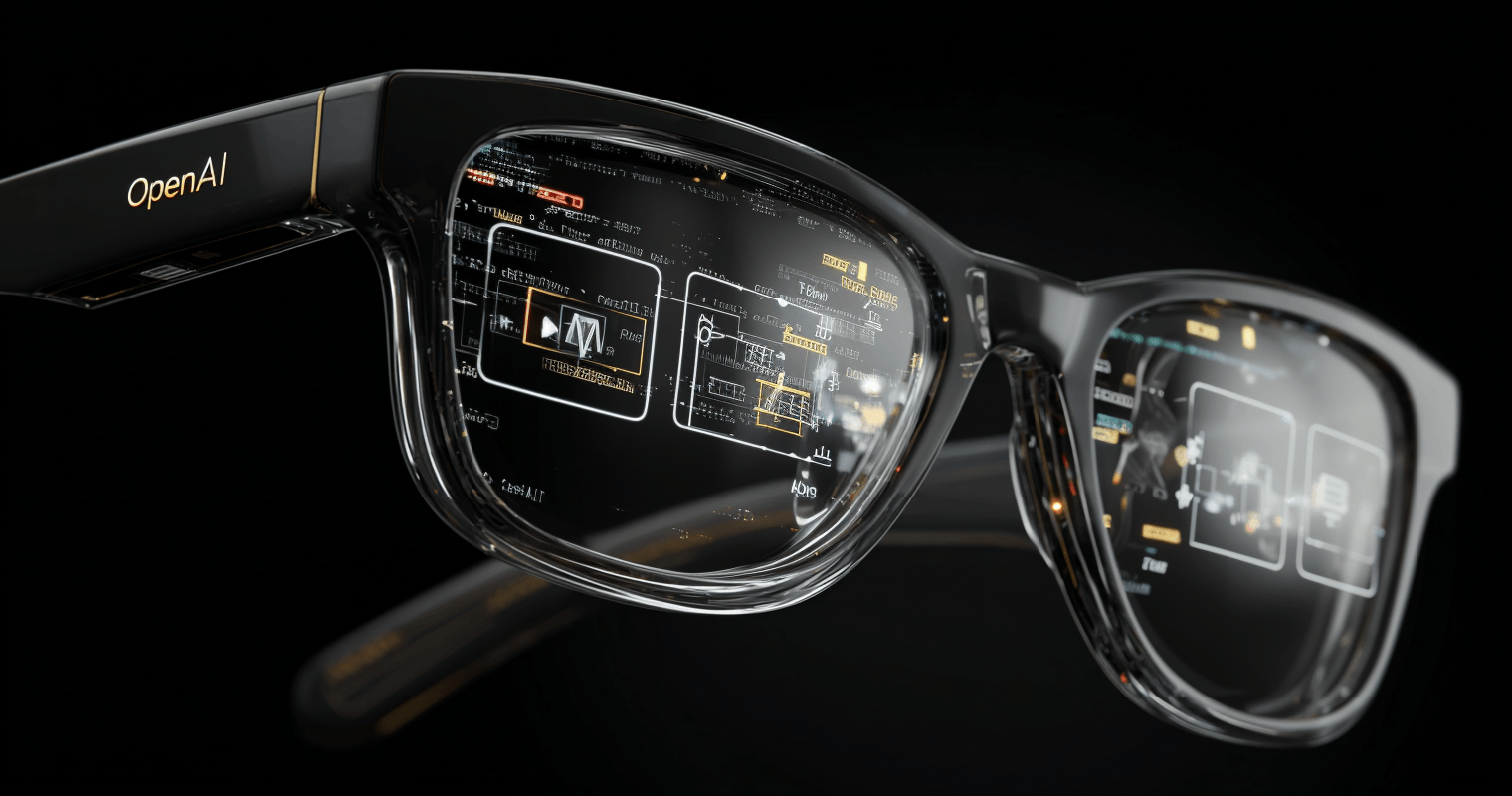

We’re seeing AI-first companies working towards supplanting Google’s and Apple’s smartphone operating systems…And one of the most visible pushes...