Musk Pulls the Trigger on the TeraFab


One trillion dollars…

That’s the number NVIDIA CEO Jensen Huang put on the table at this year’s GPU Technology Conference (GTC). He wasn’t referring to his company’s market cap, which is already far beyond that level. He was referring to the revenue he expects NVIDIA to earn from 2025 through 2027.

And that implies NVIDIA is on a path to generate roughly $500 billion in 2027 alone. Only Walmart (WMT) and Amazon (AMZN) have more annual sales than that.

This is an extraordinary figure. And the reason for this is simple… AI-driven demand continues to increase, just as we’ve been predicting at Brownstone Research.

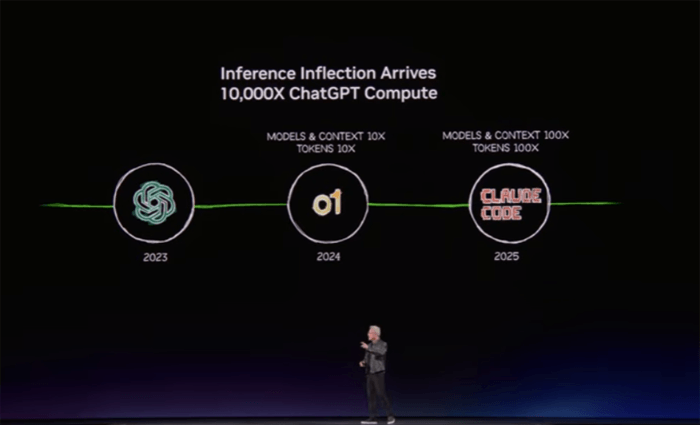

Since the release of ChatGPT in late 2022, NVIDIA estimates that token generation demand per AI task has increased 10,000x. At the same time, overall model usage has surged 100x. Together, that implies a 1,000,000x increase in compute demand in just a couple of years.
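As a quick check on that arithmetic, here is the multiplication written out. It treats the two NVIDIA estimates as independent multipliers, which is a simplifying assumption of this sketch rather than something NVIDIA has stated:

```latex
\underbrace{10^{4}}_{\text{tokens per task}} \times \underbrace{10^{2}}_{\text{model usage}} = 10^{6}
```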

Huang doesn’t believe this is going to slow down either. Neither do we. Frontier AI model companies are clamoring for more compute. He said:

“It’s the feeling that OpenAI has, it’s the feeling that Anthropic has. If they could just get more capacity, they could generate more tokens, their revenues would go up, more people could use it, the more advanced, the smarter the AI could become.

“We are now at that positive flywheel system, we have reached that moment… the inference inflection has arrived.”

It’s important to understand this quote. OpenAI, Anthropic, Google, xAI and Meta are all operating with the same mindset. If they can access more compute, they can scale their products, improve performance, and generate more value.

That’s why Huang described this moment as an inflection point. The industry has entered a self-reinforcing loop where demand for compute feeds on itself. The more capacity that comes online, the more it gets used.

What most investors still don’t appreciate is what comes next. We are moving beyond simple prompts and responses and into the era of autonomous AI agents.

These systems don’t just answer questions. They run workflows, write code, analyze data, and make decisions. And importantly, they operate continuously.

With the release of OpenClaw – the open-source agentic AI platform that Jeff’s been covering in The Bleeding Edge – it’s now easier than ever for individuals to program their own AI agents that will continuously do work for them.

Now OpenClaw is designed to run locally on a computer, but the reality is that meaningful workloads will still be pushed into hyperscale environments where compute can scale efficiently.

This shift matters because persistent, always-on AI systems consume far more compute than the first wave of chatbot-style interactions. Which means the next surge in demand will be even greater.

And it’s not just OpenClaw. Another leading player in AI software, Perplexity, recently launched Perplexity Computer, which can draw on all of the frontier AI models. Rather than being open source like OpenClaw, Perplexity Computer has been productized with platform security and ease of use in mind. No programming skills are required at all.

This brings us to the real constraint… Not chips. Not software. Not capital.

Electricity.

If NVIDIA hits the scale Jensen Huang is signaling, we’re no longer talking about incremental growth in data center capacity. We’re talking about a step-function increase in global power demand.

Let’s walk through what that actually looks like.

Assume NVIDIA reaches roughly $500 billion in annual revenue by 2027, driven primarily by its next-generation Vera and Rubin systems, alongside current Grace and Blackwell platforms.

At that scale, we’re looking at deployments on the order of millions of GPUs.

Using NVIDIA’s NVL72 architecture as a baseline, each rack contains 72 GPUs and 36 CPUs. To support that level of revenue, we arrive at roughly 125,000 racks – about 9 million GPUs – deployed globally. That implies about $4 million of revenue per rack.

Now consider the power draw. Each of these racks consumes approximately 200 kilowatts. Multiply that across the full deployment, and we’re already at 25 gigawatts of electricity demand just to run the compute hardware.

But that’s only part of the story.

Modern AI data centers require advanced cooling systems, networking infrastructure, and redundancy layers. When we account for total facility overhead using a typical power usage effectiveness (PUE) factor of 1.3, total demand rises to roughly 32 to 33 gigawatts.
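The arithmetic above can be reproduced in a few lines. This is a minimal back-of-envelope sketch that uses only the round numbers quoted in this piece. The function and variable names are illustrative, and the revenue-per-rack line is a derived figure that assumes all revenue comes from rack sales. It is not a number NVIDIA has published.

```python
# Back-of-envelope check of the rack, GPU, and power figures quoted above.
# Every input is one of the article's round numbers; names are illustrative.

GPUS_PER_RACK = 72          # NVL72 rack
RACKS = 125_000             # estimate for ~$500B of annual revenue
RACK_POWER_KW = 200         # approximate draw per rack
PUE = 1.3                   # power usage effectiveness (facility overhead)
ANNUAL_REVENUE_USD = 500e9  # assumed 2027 revenue


def estimate_power(racks, rack_power_kw, pue):
    """Return (IT load, total facility load) in gigawatts."""
    it_gw = racks * rack_power_kw / 1_000_000  # convert kW to GW
    return it_gw, it_gw * pue


total_gpus = RACKS * GPUS_PER_RACK
it_gw, facility_gw = estimate_power(RACKS, RACK_POWER_KW, PUE)
# Simplification: attributes all revenue to racks.
revenue_per_rack = ANNUAL_REVENUE_USD / RACKS

print(f"GPUs deployed:            {total_gpus:,}")
print(f"IT load:                  {it_gw:.1f} GW")
print(f"Facility load (with PUE): {facility_gw:.1f} GW")
print(f"Implied revenue per rack: ${revenue_per_rack:,.0f}")
```

Changing `RACK_POWER_KW` or `PUE` shows how sensitive the total is to those two assumptions. A more efficient facility with a PUE of 1.2, for example, would bring the same deployment down to about 30 gigawatts.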

And that’s just NVIDIA. Once we factor in AMD systems and the growing use of custom application-specific semiconductors for AI by hyperscalers, total demand quickly approaches 60 to 65 gigawatts.

That’s the true scale of what’s coming.

And this is where the problem becomes clear.

A majority of these AI data centers are being built on U.S. soil. Last year, the United States added about 53 gigawatts of new power generation capacity, the highest level since 2002. On the surface, that sounds like progress.

But it’s not nearly enough. Even more concerning is what kind of power we’re adding.

According to the EIA, roughly 65% of new capacity is coming from solar and wind, both of which are intermittent energy sources. Another 28% is going toward battery storage, which helps manage variability but does not generate new electricity.

When we strip all of that out, only about 7% of new capacity – roughly 6.5 gigawatts – is true baseload power capable of running continuously, the kind necessary to power AI factories.

Set against the 60 to 65 gigawatts of AI demand on the horizon, we’re adding only a fraction of that in reliable, always-on power.

Even under the most optimistic projections, we’re nowhere close to closing that gap. And historically, actual buildouts fall well short of what’s planned.

Yes, we can attempt to reroute intermittent energy toward less critical use cases and reserve baseload power for AI. But that’s a temporary fix, not a scalable solution.

New power is the biggest hurdle that Jensen Huang and NVIDIA face in realizing their lofty revenue targets. And this problem will get worse every year. NVIDIA’s growth doesn’t stop in 2027. Not even close.

Wall Street is already projecting another 20% growth in 2028, pushing revenue over $600 billion. If I were to make a bet, I’d take the over on that number.

And every incremental dollar of that growth means more electricity. This is the constraint Jensen is solving for.

He needs more than just faster chips. He needs a new deployment model for compute itself.

This is why one of the most important announcements at GTC matters so much, even though it was easy to miss.

Huang didn’t just talk about next-generation systems for terrestrial data centers. He introduced something entirely different.

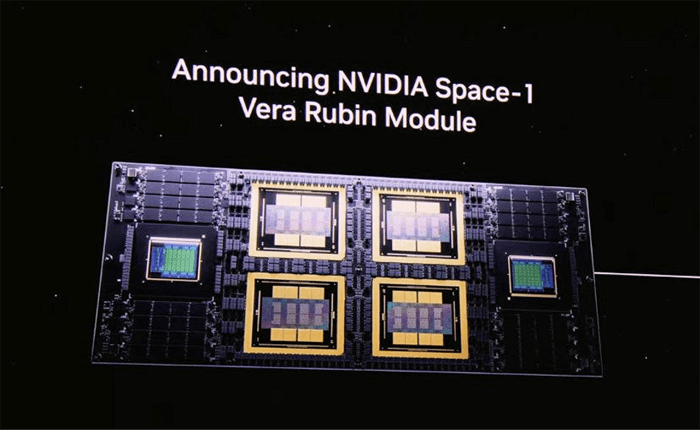

The NVIDIA Space-1 Vera Rubin module.

NVIDIA GTC Presentation | Source: NVIDIA

At first glance, it looks like a compact version of NVIDIA’s terrestrial GPU architecture. As we can see, the architecture pairs two Rubin GPUs with a single Vera CPU on a highly specialized board.

But that misses the point entirely. This system wasn’t designed for Earth. It was designed for orbital AI data centers.

Operating in space introduces a completely different set of challenges. Radiation constantly interferes with electronics, causing errors that can crash traditional systems. Instead of relying on slower, hardened chips, NVIDIA engineered around the problem.

The system runs duplicate computations across paired GPUs and compares the results in real time, instantly correcting any discrepancies. Combined with advanced error correction at the memory level, this creates a highly resilient architecture capable of operating in one of the harshest environments imaginable.

And despite those constraints, performance is staggering. The module delivers a 25x leap over prior generations, enabling real-time AI inference, autonomous decision-making, and even orbital training of advanced AI models.

A specific launch date has not yet been announced. But NVIDIA said six companies are already using its accelerated computing platforms to power next-generation space missions.

This is also why Elon Musk is looking towards outer space to expand AI data centers with his constellation of one million satellites.

In our latest issue of The Near Future Report, we said:

On Earth, building new power plants takes years. Transmission lines take even longer. Regulators slow everything down. Communities fight new infrastructure. And even when projects are approved, data centers compete for the same finite grid capacity.

But in low Earth orbit, those constraints disappear.

Approvals are easier to obtain. There is no NIMBY-ism fighting the construction. And no waiting years for utility hookups. Each AI satellite launches with its own power generation attached.

In space, solar panels generate about five times more electricity than they do on the ground. And they operate nearly 24 hours a day. Low Earth orbit (LEO) has no clouds, no inclement weather, and no night cycles when in sun-synchronous orbit.

That’s why Musk is thinking beyond terrestrial data centers.

And we are confident that Musk will achieve this.

Once Starship drives launch costs down toward $100 per kilogram – which we believe is achievable by 2028 based on the current trajectory – the economics of compute begin to shift in a way most investors aren’t prepared for.

At that point, orbital AI data centers will be the most cost-efficient way to generate AI compute.

No land constraints. No grid bottlenecks. No cooling limitations. No permits to worry about. Just scalable, limitless free energy infrastructure operating in an environment purpose-built for exponential growth.

This is the moment the entire cost curve flips. And Elon Musk sees it coming.

That’s exactly why he’s now positioning SpaceX for a public offering. He wants to raise capital to accelerate his much larger vision.

Building orbital data centers isn’t just about launching satellites. It requires an entirely new supply chain, from launch infrastructure to satellite components to advanced solar panels, power systems, and next-generation compute architectures. And potentially even in-space manufacturing.

And Musk intends to build it all. This is a part of his AI masterplan.

Most investors are still focused on GPUs, software, and cloud providers. But the real shift is happening one layer deeper, at the infrastructure level.

More to come…

Regards,

Nick Rokke

Senior Analyst, The Bleeding Edge

P.S. Hi, Jeff’s managing editor here. If you want to learn more about this, Jeff recently put together a detailed presentation breaking down the opportunity in the new space economy and the ensuing infrastructure buildout…

He covers how this transition is unfolding, why it’s happening now, and most importantly, how to identify which companies are positioned to benefit as the data center buildout moves beyond Earth.

It’s important to position yourself now because the transition is already underway. And the biggest gains are made early, before the majority of investors realize the magnitude of this shift.

You can go here to access that presentation.
