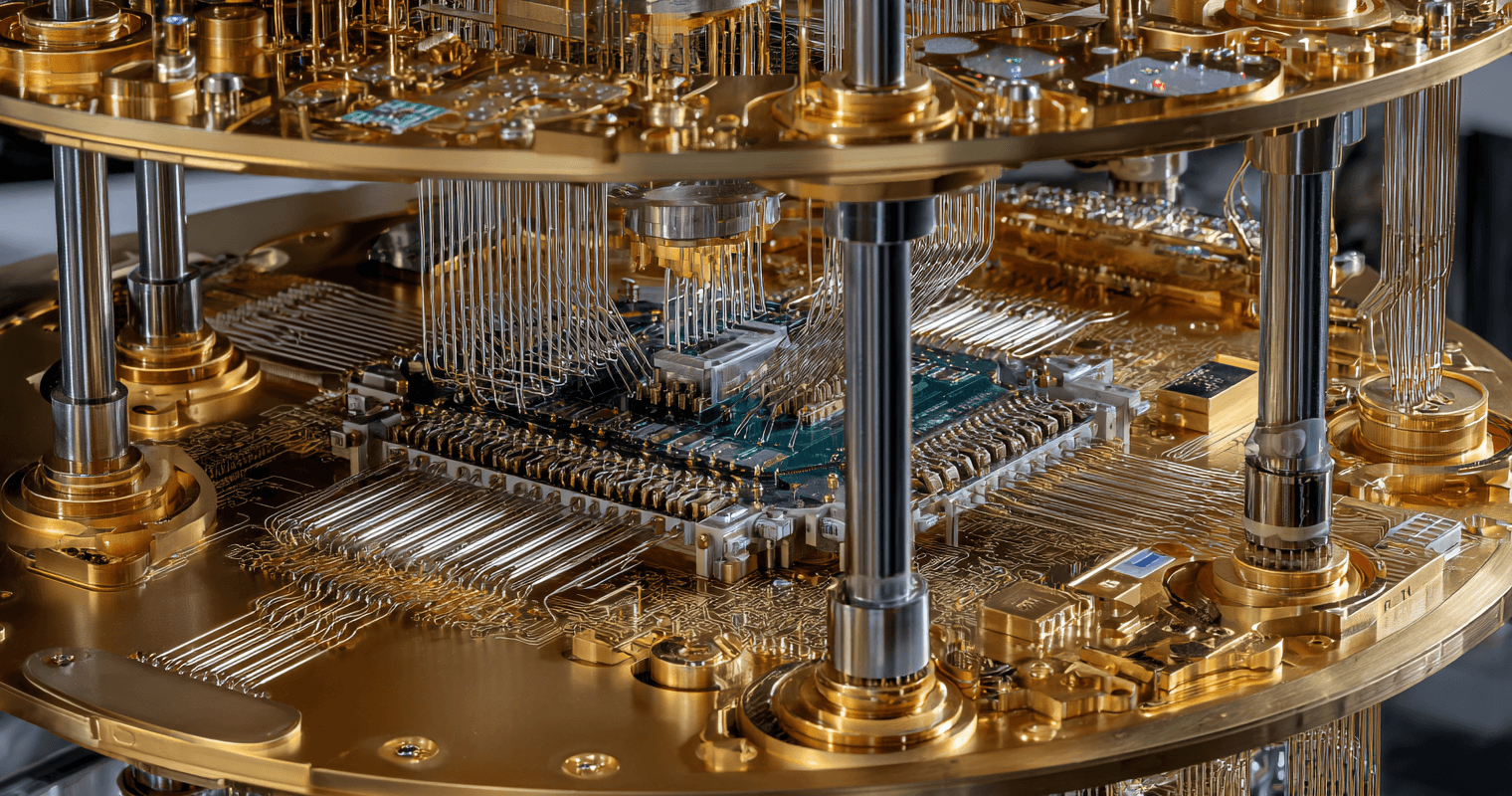

The Next Quantum Milestone

Last week brought some excitement to the quantum computing industry… The developments were a welcome relief after an excruciating...

Late last week, Anthropic, one of the leading frontier AI model companies, released the latest version of its leading AI model, Claude Opus 4.7.

It can be hard to keep up with all the latest developments and releases of leading frontier AI models these days.

They are happening every month now.

The Opus 4.7 release was largely unremarkable.

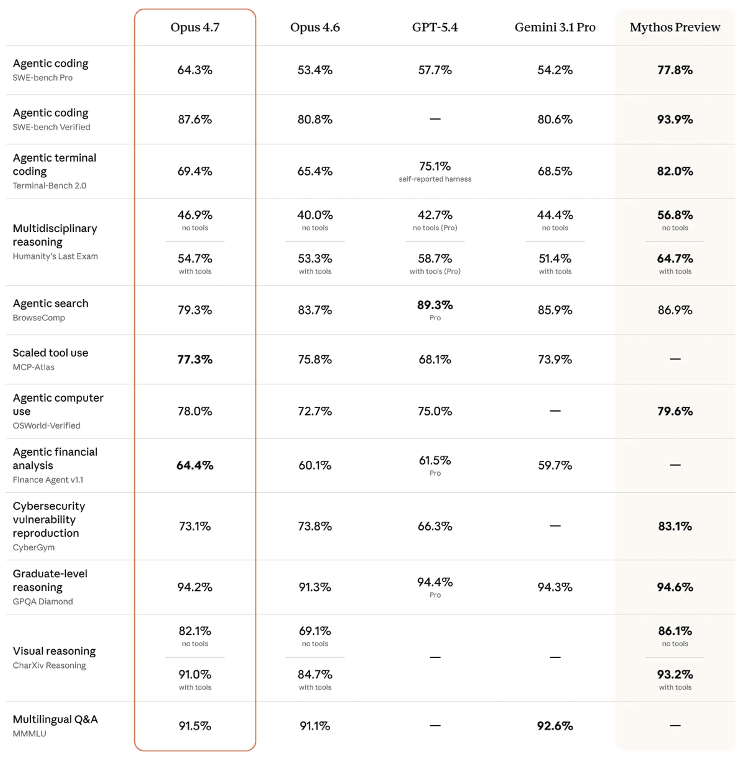

Its benchmark scores, highlighted in the left column below, showed modest improvements in all but two categories compared to its predecessor, Opus 4.6.

Source: Anthropic

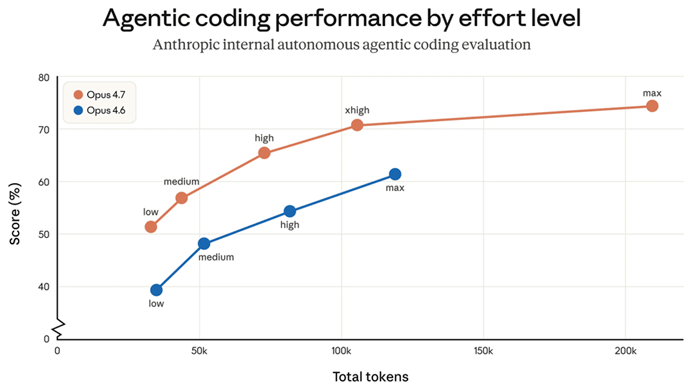

Anthropic positioned Opus 4.7 as better at software engineering, an area in which Anthropic has been leading the industry.

It also indicated that Opus 4.7 is even better at accomplishing real-world tasks, as evidenced by the chart below, which shows its improved agentic coding performance by computational effort.

Source: Anthropic

While not a breakthrough, there are still some impressive changes.

But the announcement was completely overshadowed by news of a far more powerful AI model that Anthropic has been developing in the lab.

That development is now known as Claude Mythos.

An internal Anthropic memo concerning Mythos was leaked in late March. It describes Mythos as a “step change” in AI performance.

Those are big words with serious implications.

Whispers of artificial general intelligence (AGI) surfaced.

So did negative comments from naysayers, suggesting that it was just a marketing ploy, that Anthropic doesn’t have access to enough computational resources to get there… and that the company needs to raise additional capital.

Ironically, both positions can be accurate at the same time.

After all, the end game isn’t AGI. It’s artificial superintelligence (ASI).

AGI, from my analysis, is already here. It’s just not widely used.

It’s primarily in the AI laboratories, being refined and being shared with a small number of customers and government agencies.

AGI is what gives AI companies and investors the confidence and commitment to lean in, swing harder, and commit to ASI.

After the leak of Mythos in March, there wasn’t anything that Anthropic could do to suppress the now-public knowledge of the model.

So it went so far as to provide a peek at the model’s capabilities, which appear in the far-right column of the benchmark table above, labeled “Mythos Preview.”

The Mythos Preview benchmark scores are not only notably higher than those of Opus 4.7 in all categories, but also significantly higher than those of OpenAI’s GPT 5.4 in all but one category.

Now, to be clear: The Mythos model is not yet generally available to the public. It’s still in the lab and available to a small cohort of early-access users.

By all accounts, Mythos is the model that looks to be a major step up from anything Anthropic has released to date.

But that’s not why it got so much attention.

As it turns out, Mythos is extremely good at cybersecurity.

More specifically, it can be leveraged as a powerful tool that autonomously finds and exploits vulnerabilities in software and networks.

In the wrong hands, it could be an absolute nightmare.

Which is why it has been positioned as an AI that is “too powerful for public release.”

As an analyst, I find the timing of the leak to be highly suspicious.

The tech is real, but there is a game being played by Anthropic. These events reveal Anthropic’s agenda.

After all, in early March, the U.S. Department of War (DOW) deemed Anthropic to be a supply chain risk.

That came as a result of Anthropic’s politics and insistence on dictating how its software can and cannot be used by the U.S. government.

Anthropic’s AI models are well known for being programmed with bias on many issues, which creates inherent and sometimes unknown risks in their outputs.

Designating Anthropic as a supply chain risk was a reasonable stance for the DOW to take.

This set Anthropic on a path of actions in response to the DOW blacklisting, including:

What’s interesting here is that while the DOW blacklisting of Anthropic means that the company’s technology is excluded from DOW contracts, it still allows other government agencies to work with and evaluate the technology.

And it appears that is exactly what is happening.

The National Security Agency (NSA) is working with Mythos to stress test its own systems against future cyberattacks.

In the right hands, a powerful tool like Mythos can enable governments, companies, and even individuals to harden their IT networks against bad actors.

Mythos’ newfound capabilities reveal a stark reality of the world we have just entered.

That being:

The above is almost certainly why Anthropic has not released Mythos to the public, and why it is allowing the NSA and Project Glasswing partners to get an early look to prepare for the deluge of cyberattacks to come.

The news has been well received by the markets for cybersecurity companies.

CrowdStrike, for example, fell 37% from November through February on the belief that Anthropic would become a competitor rather than a partner.

And since Glasswing was launched, the stock has bounced about 20%.

1-Year Chart of CrowdStrike (CRWD)
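For readers who want to check the math on that move, here is a minimal Python sketch of the percentage arithmetic. It assumes the roughly 20% bounce is measured from the post-decline price, which the figures above do not state explicitly, and the function names are hypothetical helpers made up for this illustration.

```python
def net_change_pct(drop_pct: float, rebound_pct: float) -> float:
    """Net percentage change after a drop followed by a rebound.

    Assumes the rebound is measured from the post-drop price
    (an assumption for illustration, not a stated fact).
    """
    remaining = (1 - drop_pct / 100) * (1 + rebound_pct / 100)
    return (remaining - 1) * 100


def rebound_to_recover_pct(drop_pct: float) -> float:
    """Percentage gain needed from the low to get back to the pre-drop price."""
    return (1 / (1 - drop_pct / 100) - 1) * 100


if __name__ == "__main__":
    # Figures quoted in the text: a 37% decline, then a bounce of about 20%.
    print(f"Net change: {net_change_pct(37, 20):.1f}%")
    print(f"Rebound needed to fully recover: {rebound_to_recover_pct(37):.1f}%")
```

Under that assumption, a 37% decline followed by a 20% bounce still leaves the stock about 24.4% below its starting level, and it would take a gain of roughly 58.7% from the low to get all the way back.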

Perhaps the most visible indication that what Anthropic has is real is the announcement yesterday that Amazon (AMZN) will be investing up to another $25 billion in Anthropic.

This is on top of the $8 billion that it has already invested in the leading AI company.

Part of the deal with Anthropic also stipulates that Anthropic will spend more than $100 billion on Amazon Web Services over the next 10 years.

This is real money, with limited leverage (debt).

Amazon not only has the cash for investment… it has most of the cash for the $200 billion worth of CapEx that it will spend primarily on AI infrastructure this year.

It’s also why Anthropic needs to access the public markets this fall.

It has raised about $61 billion to date, plus an additional $5 billion in the short term from Amazon, with as much as $20 billion more to follow.

But that’s just for Anthropic’s near-term goals.

Getting to ASI is a whole other story. That will require a massive capital raise in an IPO, which is currently targeted for October this year.

Jeff
