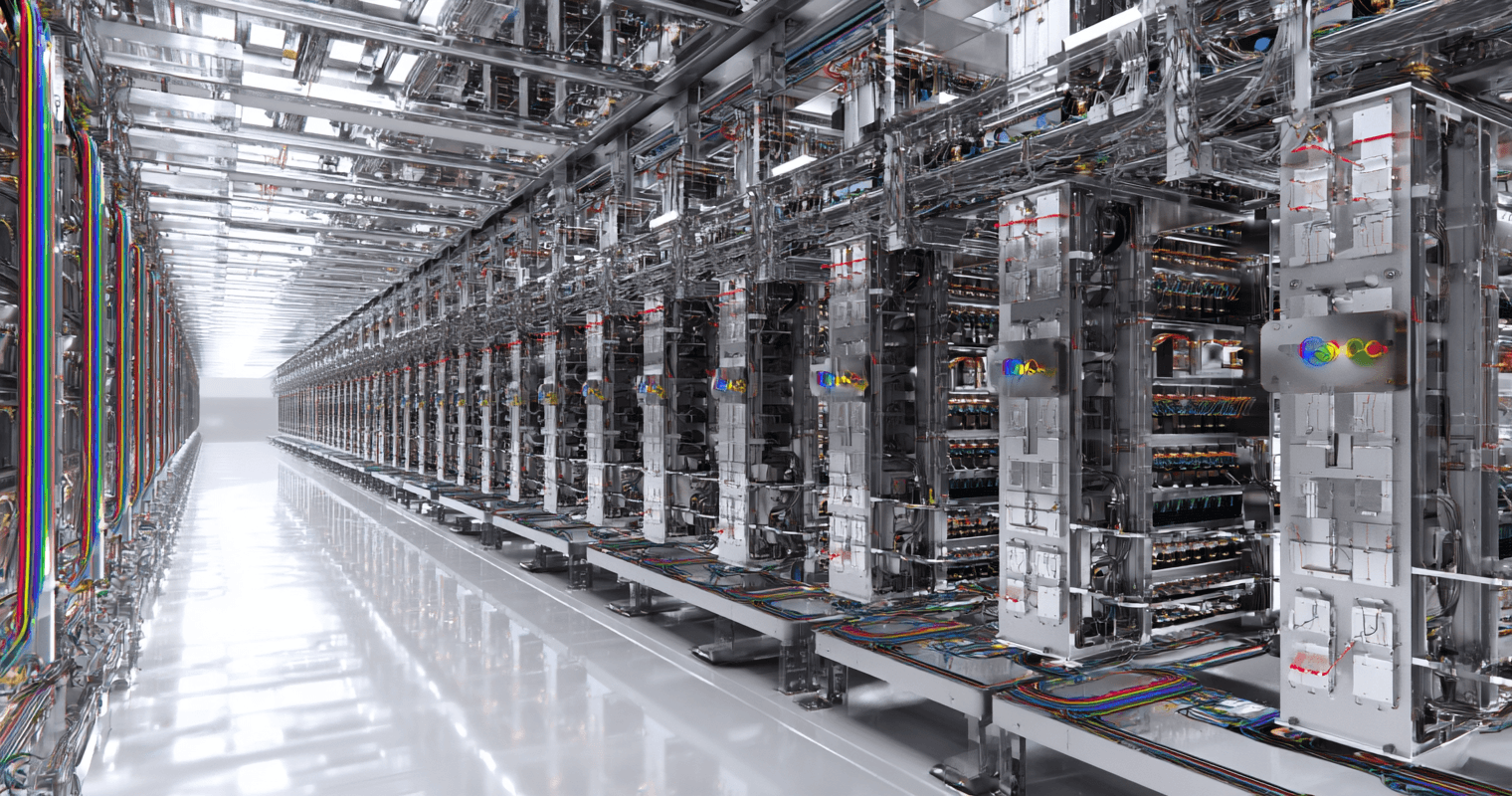

Google’s Pod Power

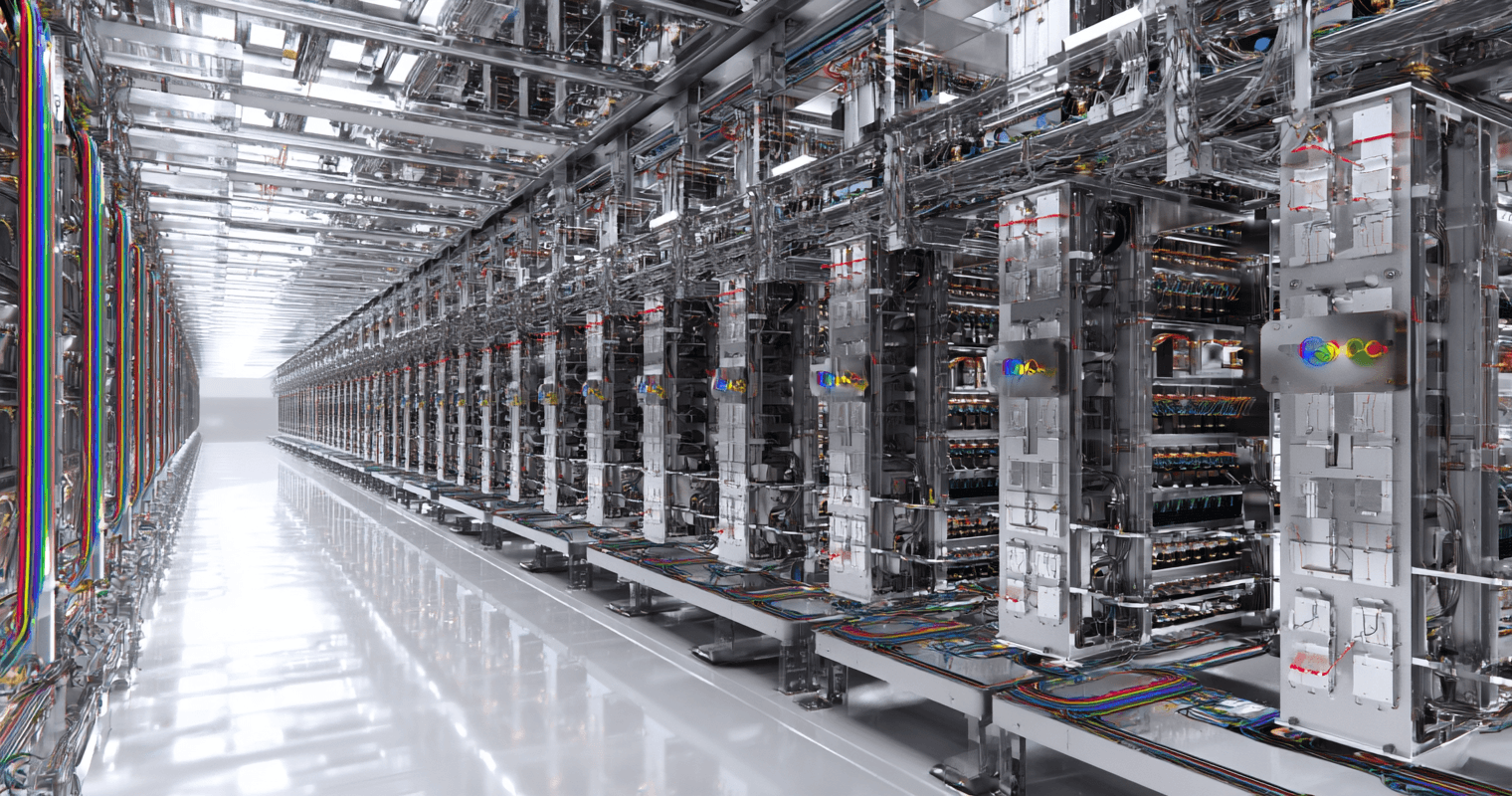

The biggest announcement of the week was about Google’s 8th generation of its TPUs, short for Tensor Processing Units.


Managing Editor’s Note: There’s a structural problem with the AI buildout, and it’s built so deeply into the framework that it could paralyze some of the biggest names in AI.

But there’s a 10X convergence set to solve this structural problem and shift the paradigm of the AI market completely, leaving the old guard of AI behind as a crop of newcomers takes their place at the bleeding edge of AI.

And there’s one private company that holds the technology at the core of this 10X convergence, essentially giving it a virtual monopoly on the next era of AI.

Jeff is sitting down next Wednesday with Marc Chaikin – developer of the world-famous Power Gauge System – to discuss the details and help people prepare ahead of the IPO.

You can go here to add your name to the guest list to join them on April 29 at 8 p.m. ET.

It has begun…

Early today, Tesla announced that mass production of its fully autonomous Cybercab has begun. As a reminder, the Cybercab has no steering wheel, no brake pedal, and no gas pedal.

It is a fully autonomous two-seater designed to completely redefine public transportation.

A Cybercab can literally drive itself off the production line and onto the streets and get straight to work. It is a concept that is completely foreign to our “normal” view of car production and operation.

Source: Tesla

I seriously doubt that Tesla would be producing these Cybercabs if it didn’t have the confidence or distribution to put them to work. Its Robotaxi network has expanded to Dallas and Houston now, and I am certain this is just the beginning of a major rollout this year.

I can’t wait to take my first ride in a Cybercab in the weeks ahead.

Here’s to our autonomous future,

Jeff

Jeff, you are neglecting the biggest player in the quantum computing game. The player with the most qubits and the best software and quantum simulator. As usual, it’s IBM. You seem to be missing its quantum computing capability in favor of the little guys. I think it is now operating at over 400 qubits, among its other features.

– Jim C.

Hi Jim,

You’ll probably be surprised to hear that I’ve been tracking the research and development at IBM (IBM) in quantum computing for more than a decade. I am very bearish on IBM in general, and also with regard to its quantum computing initiative, which is why I haven’t written much about it at all.

To be fair, and to your point, if we were to define the amount of R&D spend on quantum computing as the key metric for being a “big player” in the field, I would agree with you that IBM is one of them.

But if we were to define “big player” by which companies are having the most success commercializing their quantum computing technology, then IBM wouldn’t be a major player. That is why I haven’t been very interested in IBM’s quantum computers.

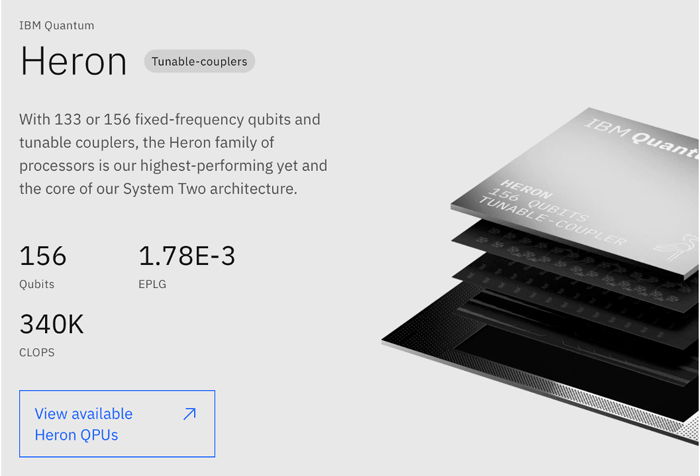

IBM’s approach to quantum computing is based on superconducting qubits. Its most advanced superconducting quantum chip is Heron, which comes with either 133 or 156 physical qubits.

Source: IBM

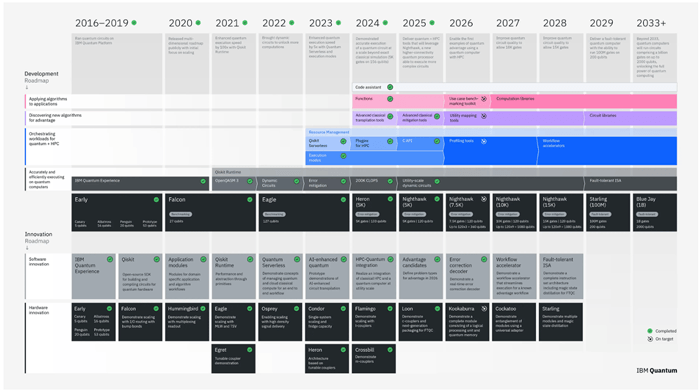

IBM doesn’t plan to deliver Starling, its 200-logical-qubit system, until 2029, and Blue Jay, its 2,000-logical-qubit system, is forecast for 2033 or later.

If you’d like to see IBM’s full roadmap for quantum computing, you can see it right here. Below is the roadmap, but it is small and probably difficult to read.

Source: IBM

To date, one of my criticisms of IBM’s quantum computing program has been a primary focus on hardware.

However, when pursuing superconducting quantum computers, the software is arguably more important – specifically, quantum error correction software.

This is where IBM is very weak. It is also why I have consistently highlighted Google/Alphabet (GOOGL) as one of the major players. Google’s quantum computing technology has been leading in quantum error correction software and systems.

IBM’s roadmap has seen a lot of slippage over the years. It continues to lag behind the industry in actual performance. And it has made up marketing terms like “quantum volume” in an effort to make its own system performance look better than the rest of the industry. For me, this is always a warning sign.

IBM refuses to release details on the commercial adoption of its quantum computing products. It withholds them because the reality is that IBM has struggled to commercialize its quantum technology.

This is a tired pattern at IBM. The company has interesting research and development initiatives, but it struggles to commercialize them and become a leader in the field.

Google does not have that problem, nor do the other quantum computing companies that I have written about in the past.

And aside from its quantum computing initiatives, IBM is saddled with almost $70 billion of debt and is being out-innovated in the fields in which it is competing. Growth is stagnant, and most of its business will be radically disrupted by bleeding-edge companies employing artificial general intelligence (AGI).

I hope that provides some useful context.

I’m curious to hear Jeff’s comments about this LinkedIn post that some US states are considering banning data center construction.

- 12 US states are considering bans

- $156B in projects stalled or scrapped

- Maine just passed the first state moratorium

– George B.

Hi George,

There has definitely been a lot of fearmongering about this topic in the last few months.

We have to wonder what their objective really is.

The decels literally want to impede progress. A population that has access to an abundance of products and services, a higher quality of life, and more freedom is much harder to control.

When things are great, it is much harder to pass through massive spending bills that can be used to defraud taxpayers. We’ve really learned a lot in the last year concerning the scale of these frauds and how systemically (i.e., not isolated) they have been designed and implemented.

And then there are those who claim to oppose the “environmental destruction” from data centers while being perfectly fine with golf courses. The context here is important. In 2023, data centers consumed about 6.4 billion gallons of water. It sounds like a lot, and it is, but data centers use closed-loop cooling systems where the water is reused after it cools down.

By comparison, golf courses in the U.S. use about 547 billion gallons of water a year. And it’s not a closed-loop system. Golf courses need that much water every year. Data centers only use about 1% of that one time.
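For anyone who wants to check the math, here is a quick sketch in Python. It uses only the two figures quoted above. The variable names are mine, and the result is only as good as those two estimates.

```python
# Figures quoted in the text above (gallons of water).
data_center_gallons = 6.4e9    # data centers, 2023
golf_course_gallons = 547e9    # U.S. golf courses, per year

# Data center consumption as a share of golf course consumption.
share = data_center_gallons / golf_course_gallons

print(f"Data centers used about {share:.1%} of what golf courses use in a year")
```

The ratio works out to a little over 1%, which is where the “about 1%” figure comes from.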

Obviously, water utilization will grow with more data center construction, but it will always be a tiny, tiny percentage of golf course consumption.

Now, at a very high level, 40–50% of all data center plans have been delayed or canceled. The primary reasons for that have been access to power and/or permitting.

It does look like Maine will move forward with its bill to temporarily ban AI data center construction until November 1, 2027. But it is just that – a temporary ban.

As for the remaining 11:

As we can see above, there may be a lot of noise coming from both sides of the aisle, but there is almost no substance or traction whatsoever.

These data centers create jobs and economic growth in states, which is why, except for Maine, they are being welcomed.

Legislation won’t be the bottleneck. Access to power production is the key problem. It will define when and where data centers will be built.

Hello, question for Jeff and others: PCmag had an article – Malware Is Sleeping on the Blockchain, and It’s Already Infected Dozens of Global Targets – and it is very worrisome to me. Once it’s embedded in the block, it can’t be removed. Do you have a perspective on this?

– Charlene R.

Hello Charlene,

This is a very interesting story that is actually very technical in terms of how the cyberattack was implemented, so we’ll have to break it down to better understand it.

At a high level, the idea of malware “sleeping” on a blockchain suggests that anybody sending a transaction or connecting to a blockchain application might get their passwords stolen.

The reality is quite different.

Blockchains are fundamentally ledgers of transactions over time, which means we can query public blockchains to look at transactions at various points in time. They are databases that store information in a globally distributed manner.
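For the technically curious, here is a minimal sketch of what “querying” a chain can look like. Nodes on Ethereum-style chains accept JSON-RPC requests, and `eth_getTransactionByHash` is the standard method for looking up a single transaction. The returned record includes an `input` field, which holds whatever data was attached to the transaction. The helper names, the all-zero hash, and the sample response below are placeholders I made up for illustration. Nothing here contacts a real node.

```python
import json


def build_tx_lookup(tx_hash: str, request_id: int = 1) -> str:
    """Build the JSON-RPC body that asks a node for one transaction."""
    return json.dumps({
        "jsonrpc": "2.0",
        "method": "eth_getTransactionByHash",
        "params": [tx_hash],
        "id": request_id,
    })


def decode_input_data(tx: dict) -> bytes:
    """Convert the hex-encoded 'input' field of a transaction into raw bytes."""
    hex_data = tx.get("input", "0x")
    if hex_data.startswith("0x"):
        hex_data = hex_data[2:]
    return bytes.fromhex(hex_data)


# Placeholder hash (all zeros), not a real transaction.
EXAMPLE_HASH = "0x" + "00" * 32
request_body = build_tx_lookup(EXAMPLE_HASH)

# Made-up response standing in for what a node would send back.
sample_tx = {"hash": EXAMPLE_HASH, "input": "0x" + b"hello ledger".hex()}
note = decode_input_data(sample_tx).decode("utf-8")

print(request_body)
print(note)
```

The point is that transaction data is just bytes that anyone can read back later. Those bytes do nothing on their own. Someone has to fetch them and choose to run them.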

Most blockchains are decentralized and resilient to being taken down or altered. This means any malicious code that gets stored within transaction data can be queried from the chain as long as it is up and running. For a chain like Ethereum, that’s 100% uptime for more than a decade.

This makes it an ideal place for malicious code to live since hackers or government officials can’t simply take a server down.

The malware attack discussed in the article is a bit complex. It involves storing what’s called a “pointer” within transaction data, which is easier to do on low-fee chains like Tron or Aptos. The pointer is not the malware. It simply “points” to a certain transaction on another chain, in this case, the Binance Smart Chain, where the actual malware payload sits.

The payload – the lines of code that hold the malware – sits in the data that’s pulled from the transaction. It starts to run after it gets decrypted by the person who requested the data.

The malware is quite dangerous. It can steal passwords from more than ten different password managers, comb through browser data to find stored logins and cookies, and infiltrate more than 60 wallet extensions, among other things.

It’s a nasty piece of code that runs silently on your computer.

But here’s the thing…

This type of malware does not infect everybody who interacts with the chain just by sitting there. The attack usually targets freelance developers.

The developer will download a software job request via GitHub. Then the developer starts the job by looking up the data using blockchain remote procedure calls (RPC), a technical task that 99% of blockchain users don’t know how to do, nor do they really need to.

The unfortunate reality for developers is that these sorts of malicious job offers happen frequently. They must always be on the lookout for malicious code in GitHub repos or even files sent to them directly.

It’s a legitimate threat, but not one that is dangerous for non-developers using blockchains for normal transactions. We’d never pull code resulting in the download of malware onto our computers when normally transacting on blockchains.

I hope that alleviates your concerns.

Thank you to everyone for writing in. As always, you can reach my team or me right here with any questions or concerns. I can’t respond to every email, and I can’t give personalized investment advice, but I do read everything that comes in and enjoy hearing from you all.

Have a great weekend.

Jeff
